Your Decision Factory’s Hidden Bottleneck

Invest in systems of action to balance how humans and AI produce and consume knowledge work

Most companies think AI will make them smarter by producing more insight. That may be only half the story. What if unbalanced AI adoption creates a denial-of-service attack on your decision infrastructure?

Roger Martin has described knowledge work organizations as decision factories. They take messy inputs and ship decisions as output. Meetings are the production line. Decks and memos are work-in-process. Rework looks like “let’s revisit this next week.”

AI dramatically lowers the cost of producing insight, which means we will ask for far more of it. The factory will fill with analysis.

Here is the operational risk: if you increase the throughput of one stage of a factory without increasing the throughput of the downstream stages, work-in-process piles up, coordination costs rise, and cycle time lengthens.

That is the denial-of-service pattern. Not external. Self-inflicted.
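A minimal sketch of that pile-up, assuming a single production stage feeding a single consumption stage at fixed weekly rates. The function name, the rates, and the twelve-week horizon are illustrative assumptions, not measurements.

```python
def simulate_backlog(produced_per_week, consumed_per_week, weeks):
    """Track work-in-process when one stage feeds a slower downstream stage."""
    backlog = 0.0
    history = []
    for _ in range(weeks):
        backlog += produced_per_week                # new insights arrive
        backlog -= min(backlog, consumed_per_week)  # decision-makers absorb what they can
        # Weeks needed to clear the remaining backlog at the current consumption rate
        weeks_to_clear = backlog / consumed_per_week
        history.append((backlog, weeks_to_clear))
    return history


balanced = simulate_backlog(produced_per_week=10, consumed_per_week=10, weeks=12)
flooded = simulate_backlog(produced_per_week=30, consumed_per_week=10, weeks=12)

print(balanced[-1])  # (0.0, 0.0)
print(flooded[-1])   # (240.0, 24.0)
```

In the flooded case, tripling production without touching consumption leaves 240 unreviewed insights after one quarter, which is roughly six months of review work at the current pace.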

For this post, I am using “insight” to mean any unit of analysis that could change a decision. A scenario run, a risk flag, a comparison table, a recommendation, a draft, a forecast.

AI could make many firms slower, not faster, unless they invest in insight consumption and systems of action as aggressively as they invest in insight production.

The decision factory, decoded

A helpful way to decompose most leadership decisions is:

Should we do this? (Strategy)

Can we do this? (Constraints)

How do we do this? (Execution)

Insights power each step, but in different ways. Strategy needs framing and tradeoffs. Constraints need validation and routing to the right experts. Execution needs translation into work, owners, and feedback loops.

AI changes the economics of generating the insights for all three steps. That is not the same as improving the decision factory’s ability to turn those insights into decisions and actions.

The rest of this post is a model that tries to make that mismatch visible.

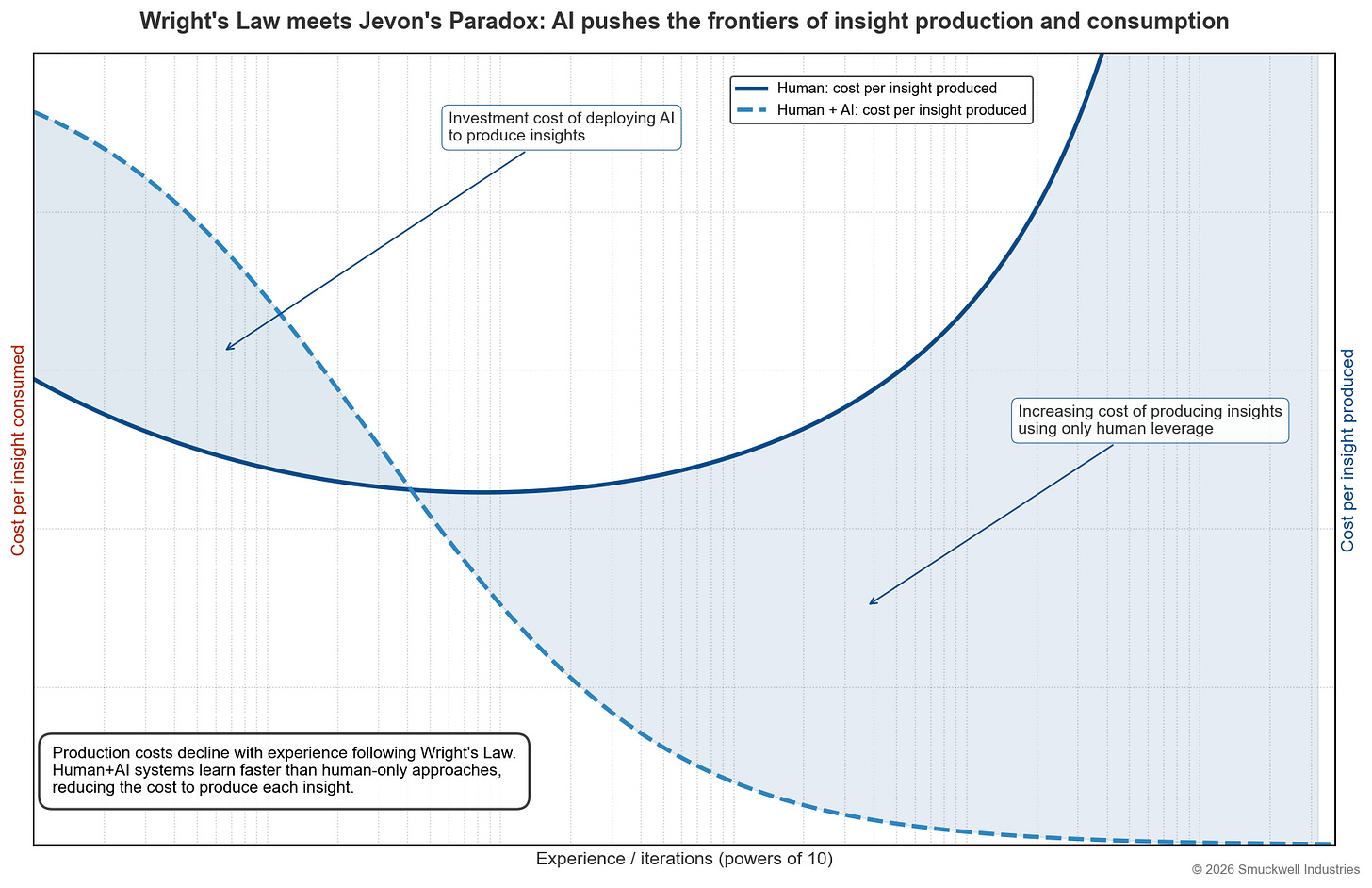

Figure 1: Production gets cheap, fast

In the first chart, I isolate the production side: cost per insight produced as experience grows.

Two ideas matter:

Human-only production has a smiling curve. It is expensive at cold start because everything is bespoke. It gets cheaper as people learn the domain and reuse patterns. Then it gets expensive again at scale, as cognitive capacity and coordination limits re-assert.

Human plus AI production starts more expensive because there are upfront investment costs: design, tooling, workflow integration, evaluation, and governance. But then it falls sharply with scale and trends toward a much lower long-run cost, because machine capacity for analysis and coordination scales far more cheaply than human capacity and the system amortizes its fixed costs over more output.

This is where most AI-forward planning lives today: how do we get the production curve down.
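One way to make the two production curves concrete is the sketch below. The functional forms and every parameter value are assumptions chosen only to reproduce the shapes described above (a smiling curve for human-only, an amortizing curve for human plus AI); they are not fitted to any data.

```python
def human_only_cost(n, bespoke=100.0, learning=0.5, coordination=0.05):
    """Cost per insight at scale n for a human-only process (hypothetical form).

    The first term falls as people learn and reuse patterns; the second
    term rises as coordination load grows with scale.
    """
    return bespoke / n**learning + coordination * n


def human_plus_ai_cost(n, fixed=2000.0, marginal=2.0):
    """Cost per insight at scale n for human plus AI (hypothetical form).

    A fixed upfront investment is amortized over n insights, leaving a
    small marginal cost in the long run.
    """
    return fixed / n + marginal


for n in (1, 10, 100, 1000, 10000):
    print(n, round(human_only_cost(n), 1), round(human_plus_ai_cost(n), 1))
```

With these illustrative numbers, the human-only cost drops, bottoms out, and climbs again, while the human plus AI cost starts far higher and ends far lower.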

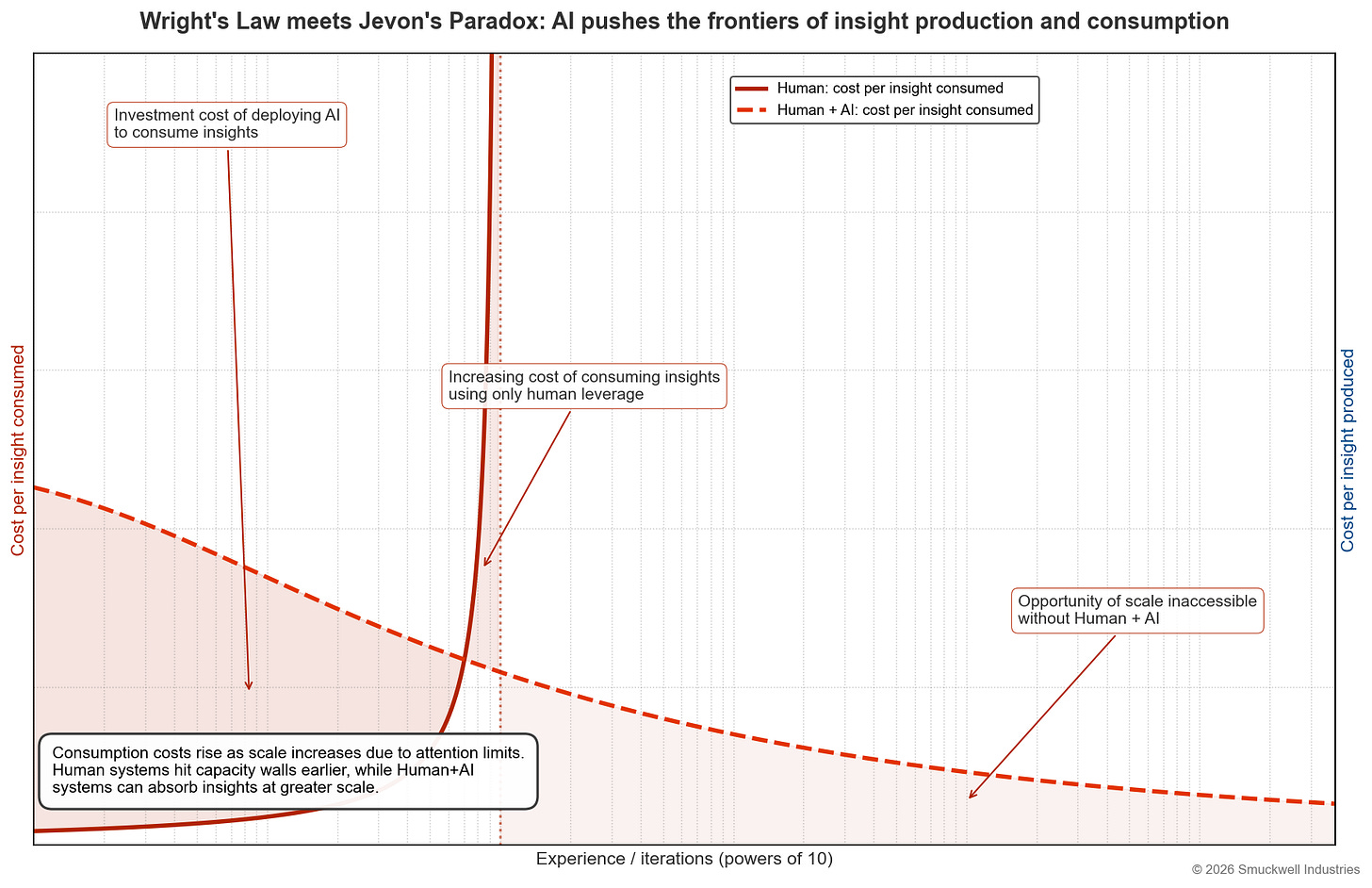

Figure 2: Consumption does not behave like production

The second chart isolates the consumption side: cost per insight consumed as experience grows.

This is the side most organizations do not model.

Human-only consumption has a hard limit. At some point, the cost of consuming insights rises rapidly, not because humans get worse, but because the system asks them to do more triage, more validation, more alignment, and more coordination per marginal unit of output.

This is what the “absorption wall” looks like in practice: more analysis creates more managerial work, which creates more coordination, which creates more delay, which creates more rework.

Human plus AI consumption can remain viable longer because machines can shoulder triage, compression, routing, and parts of verification. The result is not “humans removed,” but a consumption process that does not collapse under volume.

This chart forces an uncomfortable question: are we building a production engine that our organization cannot absorb?

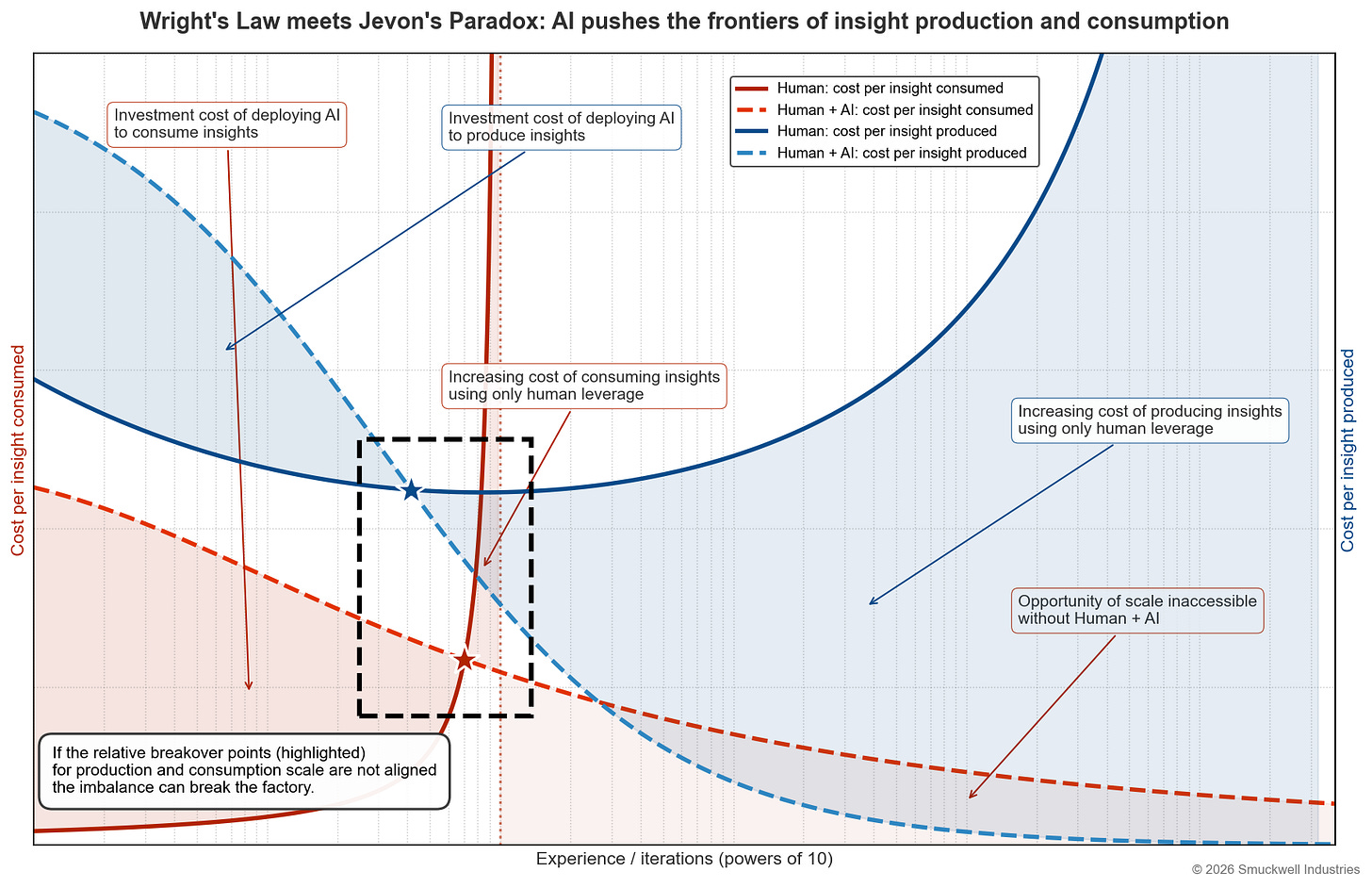

Figure 3: The composite reveals the breakover mismatch

The composite chart overlays production and consumption and highlights the regimes leaders should care about.

On the consumption side:

From low scale to the intersection, the gap represents the investment cost of deploying AI to consume insights.

From that intersection to the human-only consumption asymptote, the gap represents the increasing cost of consuming insights using only human leverage.

Beyond the asymptote, the area under the human plus AI curve represents scale that is out of reach without human plus AI. In practice, this is where the decision factory cannot keep up without machine-mediated consumption.

On the production side:

From low scale to the intersection, the gap represents the investment cost of deploying AI to produce insights.

Beyond the intersection, the gap represents the increasing cost of producing insights using only human leverage.

The key feature is the highlighted region: the breakover points for production and consumption do not have to line up.

If insight production becomes cheap before insight consumption capacity scales, the decision factory gets flooded. Outputs accumulate faster than humans can validate, align, and act. Work-in-process grows, coordination costs spike, and cycle time lengthens.

This is the internal denial-of-service pattern. It happens when the production breakover point sits to the left of the consumption breakover point.
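The mismatch can be checked numerically. The sketch below finds the breakover point on each side as the first scale at which human plus AI becomes cheaper than human-only. All four curves, the `CAPACITY` limit, and the helper `first_crossing` are hypothetical stand-ins for the charts, chosen so that human-only consumption has an asymptote.

```python
CAPACITY = 1000  # assumed scale at which human-only consumption hits its hard limit


def produce_human(n):
    return 100 / n**0.5 + 0.05 * n  # smiling curve


def produce_hybrid(n):
    return 2000 / n + 2  # amortized investment plus marginal cost


def consume_human(n):
    # Cost rises without bound as volume approaches the capacity limit
    return 5 / (1 - n / CAPACITY) if n < CAPACITY else float("inf")


def consume_hybrid(n):
    return 20000 / n + 3  # larger upfront investment, assumed for illustration


def first_crossing(human_only, human_plus_ai, scales):
    """Return the first scale where human plus AI is cheaper, or None."""
    for n in scales:
        if human_plus_ai(n) < human_only(n):
            return n
    return None


scales = range(1, 5001)
production_breakover = first_crossing(produce_human, produce_hybrid, scales)
consumption_breakover = first_crossing(consume_human, consume_hybrid, scales)

flood_risk = production_breakover < consumption_breakover
print(production_breakover, consumption_breakover, flood_risk)
```

Under these assumptions the production breakover arrives well before the consumption breakover, so there is a range of scale where AI-produced insight is already cheap but consuming it still depends on human-only capacity.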

Why this matters: planning for production without planning for consumption

Most firms treat AI as a production upgrade: more analysis, faster drafts, more scenario generation, more monitoring.

Wright's law says unit cost falls by a roughly constant percentage each time cumulative output doubles, so repetition and tooling make production cheaper. Then the Jevons paradox kicks in: cheaper insight increases demand for insight. Teams ask more questions because they can.
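A rough worked example of the two effects together. The 80% progress ratio, the constant-elasticity demand curve, and the elasticity of 1.5 are assumptions for illustration; the post does not specify any of them.

```python
import math


def wright_unit_cost(first_unit_cost, cumulative_units, progress_ratio=0.8):
    """Wright's law: each doubling of cumulative output multiplies unit cost
    by progress_ratio (0.8 means a 20% drop per doubling)."""
    return first_unit_cost * cumulative_units ** math.log2(progress_ratio)


def demand(price, base_price, base_quantity, elasticity=1.5):
    """Constant-elasticity demand (an assumed form): quantity requested
    grows as price falls. Elasticity above 1 gives a Jevons-style rebound."""
    return base_quantity * (price / base_price) ** (-elasticity)


base_price = wright_unit_cost(100.0, 1)    # cost of the first insight
later_price = wright_unit_cost(100.0, 64)  # after six doublings

base_quantity = 50.0
later_quantity = demand(later_price, base_price, base_quantity)

print(round(later_price, 2), round(later_quantity, 1))
```

In this example the cost per insight falls to roughly a quarter of its starting value, while the number of insights requested grows more than sevenfold. The volume that someone has to read, validate, and act on grows much faster than the production cost shrinks.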

Without deliberate redesign, the decision factory fills with work-in-process. You get:

more analysis that does not resolve uncertainty

more options that increase perceived risk

more stakeholders requesting more evidence

more meetings

more rework

slower decisions that feel more rigorous

This is the failure mode of the AI-forward firm that invests only in production.

The leadership move: build systems of action

If you want an AI-forward decision factory, you need a system of action that converts insight into decisions and decisions into changes in the world.

Most enterprises have systems of record (where facts live) and systems of insight (where analysis is displayed). What they lack is a system of action: orchestration that routes work, gates decisions, binds outputs to owners, and drives execution.

This is mostly consumption infrastructure.

Here are practical design moves that map cleanly to the three-step decision decomposition:

Should we do this?

Standard decision artifact with explicit tradeoffs

Evidence standards that clarify what would change the answer

Timeboxed decision windows

Decision rights that are explicit, so “alignment” does not become an endless meeting series

Can we do this?

Constraint routing workflows instead of meetings

Clear pass/fail criteria for legal, security, finance, capacity, brand

Provenance and auditability for high-risk constraints

A default policy for what gets escalated to humans versus handled by workflow

How do we do this?

Action compilation: owners, milestones, budgets, operating metrics

Automated handoffs into the execution systems (tickets, docs, comms, approvals)

Feedback loops tied to outcomes so new signals update the decision rather than spawn new decks

This is an absorption and execution strategy. It is how you keep insight supply from turning into coordination load.
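The "Can we do this?" moves above could be sketched as a small routing function: explicit pass/fail criteria, a default escalation policy, and an outcome bound to an owner. The class names, fields, thresholds, and status labels are all hypothetical; a real system of action would sit on top of existing ticketing and approval tools.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    title: str
    owner: str
    cost_usd: float
    handles_customer_data: bool


@dataclass
class Constraint:
    name: str
    passes: Callable[[Proposal], bool]  # explicit pass/fail criterion
    high_risk: bool = False             # failures here go to a human reviewer


def route(proposal, constraints):
    """Apply every constraint and return (status, failed_constraint_names)."""
    failed = [c for c in constraints if not c.passes(proposal)]
    if not failed:
        return "proceed", []  # handled by workflow, no meeting needed
    names = [c.name for c in failed]
    if any(c.high_risk for c in failed):
        return "escalate_to_human", names
    return "return_to_owner", names  # owner reworks against stated criteria


CONSTRAINTS = [
    Constraint("finance: under budget cap", lambda p: p.cost_usd <= 50_000),
    Constraint("security: no customer data",
               lambda p: not p.handles_customer_data, high_risk=True),
]

ok = route(Proposal("Pricing pilot", "ana", 20_000, False), CONSTRAINTS)
over_budget = route(Proposal("Pricing pilot", "ana", 80_000, False), CONSTRAINTS)
risky = route(Proposal("Pricing pilot", "ana", 20_000, True), CONSTRAINTS)

print(ok)
print(over_budget)
print(risky)
```

The design choice worth noting is that humans only see the third case. The first two resolve inside the workflow, which is what keeps added insight volume from becoming added meeting volume.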

The capital allocator move: invest where the bottleneck lives

Most investment flows to production because it is easy to demo. But durable advantage will come from consumption and action capacity:

triage and ranking

verification and provenance

orchestration and routing

auditability and governance

integrations that bind decisions to execution

The winners will not be the firms that generate the most insight. The winners will be the firms that convert insight into coordinated action with low rework.

Systems of action orchestrate work for scale

AI lowers the cost of producing insights. Organizations respond by demanding more insights. If consumption capacity does not scale, the decision factory slows down.

The opportunity is to redesign the factory so insight abundance translates into decision throughput.

That requires building systems of action that extend systems of record and insight. These complement the single-player AI tools we are putting in our people’s hands and help us orchestrate their work. Together they help ensure the outputs of those tools accelerate our factories rather than triggering an accidental denial-of-service attack from the inside.

Brilliant framing on the consumption bottleneck. I've seen this firsthand where teams drown in AI-generated reports that nobody acts on. The "denial-of-service" analogy is spot-on, especially when production scales faster than decision capacity.